Data scraping is the process of extracting information from a website and saving it to a spreadsheet or a local file on your computer. In this tutorial, we will explore how to scrape data from social media sites, starting with Twitter, and then do the same for Reddit using its official API. Lastly, we will learn how to scrape the text content of a web page and how to download images from web pages in bulk. So, let’s get started!

Prerequisites

Before you go ahead, please note that there are a few prerequisites for this tutorial. You should have some basic knowledge of Machine Learning, as well as basic programming knowledge in any language (preferably Python). We will be using Jupyter Notebook to write our code. If you do not already have it installed, visit the Jupyter Notebook website, or work in any other code editor of your liking.

1. Scraping tweets from Twitter using Twint

There are a number of ways to scrape tweets from Twitter. You can use the Twitter API, but its shortcoming is that it limits the number of tweets that can be scraped. Collecting the tweets manually is another option, but it takes unnecessary time and effort. This is why we will be using Twint to collect our tweets. Twint is a tool that lets you scrape tweets by different criteria, e.g. the tweets of a particular user, tweets containing a particular keyword, or tweets posted after or within a certain time.

Installations

You can install Twint by typing the following command in your terminal:

pip install twint

Scraping Twitter tweets using Twint

Scraping tweets of a particular user

import twint

config = twint.Config()

# Search tweets tweeted by user 'BarackObama'

config.Username = "BarackObama"

# Limit search results to 20

config.Limit = 20

# Return tweets that were published after Jan 1st, 2020

config.Since = "2020-01-01 20:30:15"

# Formatting the tweets

config.Format = "Tweet Id {id}, tweeted at {time}, {date}, by {username} says: {tweet}"

# Storing tweets in a csv file

config.Store_csv = True

config.Output = "Barack Obama"

twint.run.Search(config)

Output:

Tweet Id 1261004586359422979, tweeted at 18:44:56, 2020-05-14, by BarackObama says: Vote.

Tweet Id 1260955716644470784, tweeted at 15:30:44, 2020-05-14, by BarackObama says: Michelle and I want to do our part to give all you parents a break today, so we’re reading “The Word Collector” for @chipublib. It’s a fun book that vividly illustrates the transformative power of words––and we hope you enjoy it as much as we did. pic.twitter.com/ADYbL6Dzg4

Tweet Id 1260707691900612615, tweeted at 23:05:11, 2020-05-13, by BarackObama says: Despite all the time that’s been lost, we can still make real progress against the virus, protect people from the economic fallout, and more safely approach something closer to normal if we start making better policy decisions now. https://www.vox.com/2020/5/13/21248157/testing-quarantine-masks-stimulus …

....
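Because the Format string above fixes the layout of every printed line, those lines can be turned back into structured fields. The following is a minimal sketch using only Python's standard library; the function name `parse_tweet_line` and the regular expression are illustrative assumptions that mirror the Format string used in this tutorial, not part of Twint itself.

```python
import re
from datetime import datetime

# Mirrors the Format string used in this tutorial:
# "Tweet Id {id}, tweeted at {time}, {date}, by {username} says: {tweet}"
LINE_PATTERN = re.compile(
    r"Tweet Id (?P<id>\d+), "
    r"tweeted at (?P<time>\d{2}:\d{2}:\d{2}), "
    r"(?P<date>\d{4}-\d{2}-\d{2}), "
    r"by (?P<username>\w+) says: (?P<tweet>.*)",
    re.DOTALL,  # let the tweet text span several lines
)


def parse_tweet_line(line):
    """Return a dict of fields for one formatted line, or None if it does not match."""
    match = LINE_PATTERN.match(line.strip())
    if match is None:
        return None
    fields = match.groupdict()
    # Combine the separate date and time strings into one datetime object
    fields["posted_at"] = datetime.strptime(
        fields.pop("date") + " " + fields.pop("time"), "%Y-%m-%d %H:%M:%S"
    )
    return fields


# First line of the output shown above
sample = "Tweet Id 1261004586359422979, tweeted at 18:44:56, 2020-05-14, by BarackObama says: Vote."
parsed = parse_tweet_line(sample)
print(parsed["username"], parsed["posted_at"], parsed["tweet"])
```

If you change the Format string, the pattern has to change with it, so for larger jobs the CSV file written by Store_csv is likely the more robust source.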

Scraping tweets with a particular keyword

import twint

# Configure

config = twint.Config()

# Search tweets that mention Taylor Swift

config.Search = "taylor swift"

# Limit search results to 20

config.Limit = 20

# Return tweets that were published after Jan 1st, 2020

config.Since = "2020-01-01 20:30:15"

# Formatting the tweets

config.Format = "Tweet Id {id}, tweeted at {time}, {date}, by {username} says: {tweet}"

# Storing tweets in a csv file

config.Store_csv = True

config.Output = "Taylor Swift"

twint.run.Search(config)

2. Scraping Reddit using Reddit API

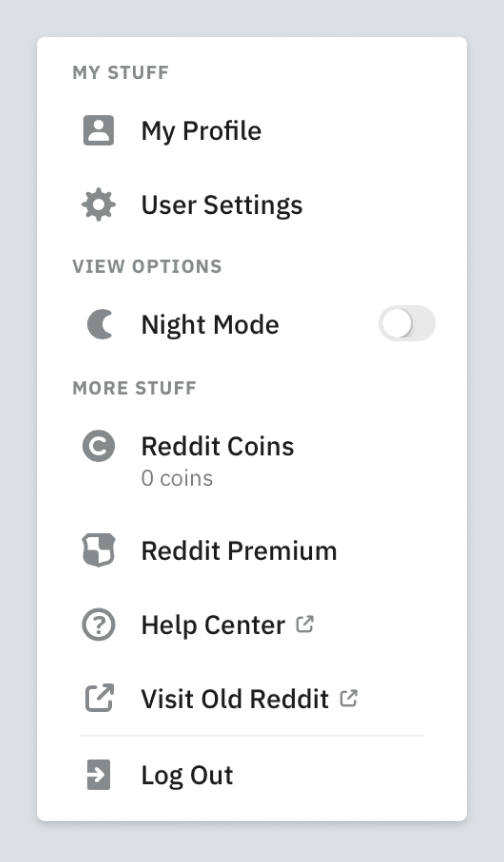

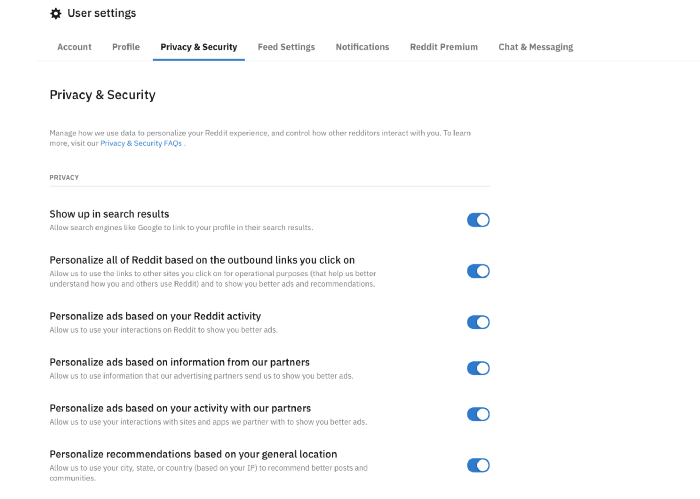

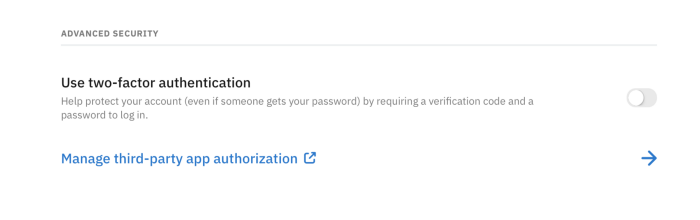

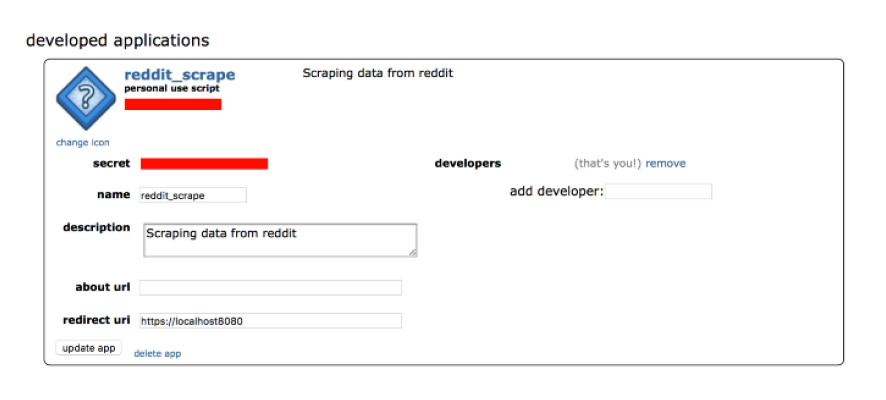

We will be scraping donation requests made on Reddit by using the official Reddit API. To access it, you need to:

- Go to the official Reddit website

- Log into your Reddit account or create a new one

- Go to User Settings

4. Go to Privacy and Security

5. Go to App authorization

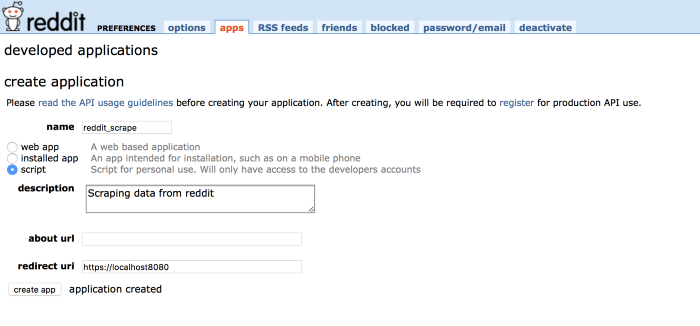

7. Create a name for your application and fill in the other relevant credentials. In redirect URL, put the URL of your localhost.

8, Click on ‘create app’
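Once the app is created, its credentials are usually best kept out of the notebook itself. The sketch below shows one possible way to read them from environment variables. The variable names (`REDDIT_CLIENT_ID` and so on) and the helper `load_reddit_credentials` are hypothetical choices for this example, not something Reddit or its API requires.

```python
import os

# Hypothetical environment variable names chosen for this example
REQUIRED_KEYS = ("REDDIT_CLIENT_ID", "REDDIT_CLIENT_SECRET", "REDDIT_USER_AGENT")


def load_reddit_credentials(env=os.environ):
    """Collect the three credentials from a mapping, failing loudly if any is missing."""
    missing = [key for key in REQUIRED_KEYS if not env.get(key)]
    if missing:
        raise KeyError("Missing Reddit credentials: " + ", ".join(missing))
    return {
        "client_id": env["REDDIT_CLIENT_ID"],
        "client_secret": env["REDDIT_CLIENT_SECRET"],
        "user_agent": env["REDDIT_USER_AGENT"],
    }


# Demonstration with placeholder values instead of the real environment
demo_env = {
    "REDDIT_CLIENT_ID": "abc123",
    "REDDIT_CLIENT_SECRET": "not-a-real-secret",
    "REDDIT_USER_AGENT": "my-donation-scraper",
}
creds = load_reddit_credentials(demo_env)
print(sorted(creds))
```

The returned dictionary uses the same three keyword names that the Reddit client in the Python code of this section expects, so it could be passed along as keyword arguments.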

Installations

We will be using PRAW (the Python Reddit API Wrapper), a Python library that makes the Reddit API easy to use. To install it, run the following command in your terminal:

pip install praw

Python Code

import praw

import pandas as pd


# Fill in your own credentials for client_id, client_secret and user_agent. The characters under 'personal use script' are your client_id, those under 'secret' are your client_secret, and user_agent is the name of your application.

reddit = praw.Reddit(client_id='',
                     client_secret='',
                     user_agent='')

# Get posts from the subreddits related to donations

hot_post_1 = reddit.subreddit ('donate').hot(limit = 10)

hot_post_2 = reddit.subreddit ('Assistance').hot(limit = 10) # Offers

hot_post_3 = reddit.subreddit ('Charity').hot(limit = 10)

hot_post_4 = reddit.subreddit ('Donation').hot(limit = 10)

hot_post_5 = reddit.subreddit ('gofundme').hot(limit = 10) # lots of categories

hot_post_6 = reddit.subreddit ('RandomKindness').hot(limit = 10)

hot_post_7 = reddit.subreddit ('donationrequest').hot(limit = 10 )

# Saving donation posts in a list

posts = []

for hot_posts in [hot_post_1, hot_post_2, hot_post_3, hot_post_4, hot_post_5, hot_post_6]:
    for post in hot_posts:
        posts.append([post.title, post.score, post.id, post.subreddit, post.url, post.num_comments, post.selftext, post.created])

posts = pd.DataFrame(posts, columns=['title', 'score', 'id', 'subreddit', 'url', 'num_comments', 'body', 'created'])

# Saving the raw donation posts to a csv file

posts.to_csv('donations.csv', index=False)

# Data Processing

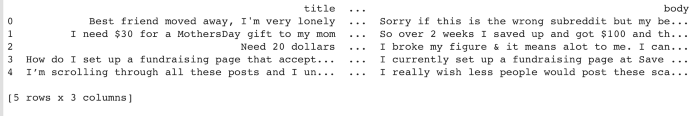

df = pd.read_csv('donations.csv')

# Keep only the title, score and body columns

df = df.drop(columns=['id', 'subreddit', 'num_comments', 'url', 'created'])

df = df[['title', 'score', 'body']]

print(df.head())

print(df.shape)

# Saving the processed donation posts to a csv file

df.to_csv('donations.csv', index=False)

Output:

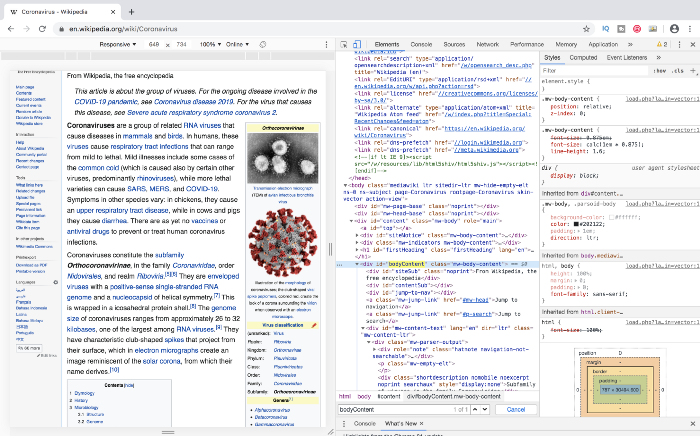
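The same column selection can also be done without pandas. The sketch below uses Python's built-in csv module on a few made-up rows that follow the column layout of donations.csv; the sample rows and the helper `select_columns` are hypothetical. It also skips posts with an empty body, which is an extra step assumed for illustration and not part of the processing shown earlier.

```python
import csv
import io

# Made-up rows in the same column layout as donations.csv (illustration only)
SAMPLE_CSV = """title,score,id,subreddit,url,num_comments,body,created
Need help with rent,12,abc1,Assistance,https://example.com/1,4,Short on rent this month.,1589480000.0
Link-only post,30,abc2,Charity,https://example.com/2,0,,1589481000.0
Fundraiser for shelter,7,abc3,donate,https://example.com/3,2,Raising funds for a local shelter.,1589482000.0
"""

KEEP = ["title", "score", "body"]


def select_columns(csv_text, keep=KEEP):
    """Keep only the chosen columns and skip posts that have no text body."""
    reader = csv.DictReader(io.StringIO(csv_text))
    rows = []
    for row in reader:
        if not row["body"].strip():
            continue  # assumed extra step: link-only posts carry no request text
        rows.append({name: row[name] for name in keep})
    return rows


rows = select_columns(SAMPLE_CSV)
for row in rows:
    print(row)
```

Note that the csv module returns every value as a string, so the score would need an explicit `int(...)` conversion before any numeric comparison.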

3. Scraping contents of a web page

We will be scraping the text content of the Wikipedia page on Coronavirus using a simple and powerful Python library named BeautifulSoup. You should also be familiar with some of the basics of HTML for web scraping. First, right-click the page and open your browser’s inspector to inspect the webpage. Hover your cursor over the section whose content you want to scrape, and you should see a blue box surrounding it. If you click it, the related HTML will be selected in the inspector. The section that we wish to scrape is a div that contains the entire text of the page.

Installations

To install BeautifulSoup, run the following command in your terminal:

pip install BeautifulSoup4

Python Code

# import libraries

import urllib.request

from bs4 import BeautifulSoup

# specify url of webpage whose content you need to scrape

url = "https://en.wikipedia.org/wiki/Coronavirus"

request = urllib.request.Request (url)

# query the website and return the html of the webpage

response = urllib.request.urlopen (request)

# parse the html using beautiful soup

var = BeautifulSoup (response,'html.parser')

# Take out the <div> and get its value

text_box = var.find ('div', attrs = {'id': 'bodyContent'})

text = text_box.text.strip ()

print (text)

Output:

From Wikipedia, the free encyclopedia Jump to navigation Jump to search This article is about the group of viruses. For the ongoing disease involved in the COVID-19 pandemic, see Coronavirus disease 2019. For the virus that causes this disease, see Severe acute respiratory syndrome coronavirus 2. Subfamily of viruses in the family Coronaviridae Orthocoronavirinae Transmission electron micrograph (TEM) of avian infectious bronchitis virus Illustration of the morphology of coronaviruses; the club-shaped viral spike peplomers, colored red, create the look of a corona surrounding the virion when observed with an electron microscope.
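To make the idea of "finding a div by its id and taking its text" more concrete, here is a rough sketch that does the same thing with Python's built-in html.parser module instead of BeautifulSoup. It runs on a small made-up HTML snippet rather than the live Wikipedia page, and the class name `DivTextExtractor` is a hypothetical helper written for this example.

```python
from html.parser import HTMLParser


class DivTextExtractor(HTMLParser):
    """Collect the text inside the <div> whose id matches target_id."""

    def __init__(self, target_id):
        super().__init__()
        self.target_id = target_id
        self.depth = 0    # greater than 0 while we are inside the target div
        self.chunks = []  # pieces of text found inside the target div

    def handle_starttag(self, tag, attrs):
        if tag != "div":
            return
        if self.depth > 0:
            self.depth += 1  # a nested div inside the target
        elif dict(attrs).get("id") == self.target_id:
            self.depth = 1   # entering the target div

    def handle_endtag(self, tag):
        if tag == "div" and self.depth > 0:
            self.depth -= 1

    def handle_data(self, data):
        if self.depth > 0:
            self.chunks.append(data)

    def text(self):
        # Collapse runs of whitespace and newlines into single spaces
        return " ".join(" ".join(self.chunks).split())


# Made-up page used only for illustration
SAMPLE_HTML = """
<html><body>
  <div id="header">Site navigation</div>
  <div id="bodyContent">
    <p>First paragraph.</p>
    <div class="note"><p>Nested note.</p></div>
    <p>Last paragraph.</p>
  </div>
  <div id="footer">Footer text</div>
</body></html>
"""

extractor = DivTextExtractor("bodyContent")
extractor.feed(SAMPLE_HTML)
body_text = extractor.text()
print(body_text)
```

BeautifulSoup hides this bookkeeping behind a single `find` call, which is why it is the more convenient choice for real pages.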

4. Scraping images

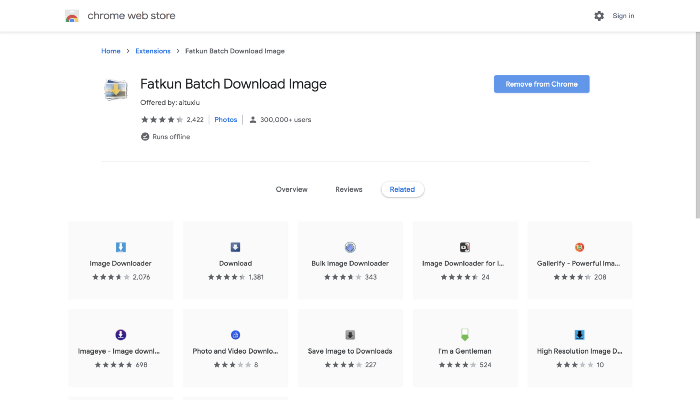

We will be scraping images in batch through the Fatkun Batch Download Image extension.

Prerequisites

You will need the Google Chrome browser along with the Fatkun Batch Download Image extension.

Steps:

- After you have finished the installation, open the website that has the pictures you want to download

- Click on the extension’s icon

- The extension will open a new tab showing all the images it has detected. By default, all the pictures in this tab are selected for download. After making your choice, click on ‘save image’.

- The extension will now show a warning and ask where to save each file before it is downloaded; you have to confirm each image.

- The extension will create a new folder named after the title of the website and download the selected images into it. You can also click on ‘more options’ to filter the images by link, rename them, and sort them by size.
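For readers who prefer code to a browser extension, the detection step (finding every image on a page) can be approximated in Python. This is not how Fatkun works internally; it is a small standard-library sketch run on a made-up HTML snippet, and the class name `ImageCollector` and the example.com addresses are placeholders.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin


class ImageCollector(HTMLParser):
    """Collect the absolute URL of every <img> tag, without duplicates."""

    def __init__(self, base_url):
        super().__init__()
        self.base_url = base_url
        self.image_urls = []

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        src = dict(attrs).get("src")
        if src:
            # Resolve relative paths against the address of the page
            url = urljoin(self.base_url, src)
            if url not in self.image_urls:
                self.image_urls.append(url)


# Made-up page fragment used only for illustration
SAMPLE_HTML = """
<div>
  <img src="img/cat.png" alt="cat">
  <img src="/static/dog.jpg">
  <img src="https://cdn.example.com/bird.gif">
  <img src="img/cat.png">
  <img alt="no source">
</div>
"""

collector = ImageCollector("https://example.com/gallery/")
collector.feed(SAMPLE_HTML)
for url in collector.image_urls:
    print(url)
```

Each collected URL could then be fetched and written to disk, for example with `urllib.request.urlopen`, which is the part the extension automates for you.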

While crawling gives easy access to many web-based data collections, such data usually comes with too much noise and contamination to be used as a dataset right away. Companies and researchers therefore need to put heavy effort into quality control, and having enough human resources for it is always a great challenge. It is often more efficient to find a service that does the laborious work (both collection and preprocessing) for you. For that, we could be your perfect solution!

Here at DATUMO, we crowdsource our tasks to diverse users located around the globe to ensure both quality and quantity. Moreover, our in-house managers double-check the quality of the collected or processed data! Check us out at datumo.com for more information!